Ad Creative Fatigue Is Happening Faster Than You Think — Here's the Data


The digital advertising landscape of 2026 is a paradox. On one hand, brand managers and ad ops teams have access to unprecedented AI-driven targeting, predictive analytics, and automated bidding algorithms that can scale campaigns globally in milliseconds. On the other hand, this same hyper-automated ecosystem has created a minefield of unpredictable content, ranging from AI-generated misinformation and deepfakes to polarizing user-generated videos. In this environment, protecting your brand's reputation is no longer a passive exercise—it is an active, daily battle.

For marketing directors and brand managers, the stakes have never been higher. A single ad placed next to toxic, offensive, or controversial content can trigger immediate consumer backlash, viral boycotts, and a measurable drop in shareholder value. Brand safety is no longer just a checkbox on a media plan; it is a critical pillar of your overarching corporate strategy.

In this comprehensive guide, we will explore what brand safety truly means in 2026, the real financial costs of unsafe ad placements, how contextual targeting is evolving to protect advertisers, and the platform-specific controls you need to master. We will also look at how integrating built-in compliance tools, like those offered by HawtAds, can safeguard your ad spend from the very first creative iteration.

What Brand Safety Actually Means in 2026

Historically, brand safety was a relatively straightforward concept. In the early days of programmatic advertising, it simply meant keeping your display ads away from the "Dirty Dozen"—categories like adult content, illegal drugs, hate speech, terrorism, and piracy. Advertisers relied on rudimentary keyword blocklists and domain exclusion lists to keep their brands out of trouble.

In 2026, the definition has fractured and evolved into two distinct but overlapping categories: Brand Safety and Brand Suitability.

- Brand Safety: This remains the universal baseline. It encompasses the universally agreed-upon categories of content that are inherently harmful or illegal. No legitimate brand wants their ads funding terrorist propaganda, child exploitation, or blatant hate speech. This is the non-negotiable floor of digital advertising.

- Brand Suitability: This is where the modern challenge lies. Suitability is highly subjective and customized to the specific values, risk tolerance, and identity of an individual brand. For example, a hard-hitting documentary about climate change might be a perfectly suitable environment for an outdoor apparel brand, but a highly unsuitable environment for a legacy airline or fossil fuel company.

The 2026 ecosystem introduces new layers of complexity to these definitions. The explosion of Generative AI has led to a flood of "Made for Advertising" (MFA) websites—low-quality, AI-generated content farms designed solely to arbitrage ad traffic. Furthermore, hyper-realistic deepfakes and AI-generated political misinformation require ad ops teams to deploy sophisticated semantic analysis tools just to understand the context of the page they are bidding on.

The Hidden (and Explicit) Cost of Unsafe Placements

The financial implications of ignoring brand safety are staggering. When your ads appear alongside toxic content, you are not just wasting impressions; you are actively funding the degradation of your own brand equity.

Let's look at the numbers. According to 2026 industry analytics, global spend on brand safety, suitability, and verification tools is projected to surpass $4.2 billion this year alone. Advertisers are willingly paying this premium because the cost of a brand safety crisis far outweighs the cost of prevention.

The costs manifest in several damaging ways:

- Direct Wasted Ad Spend: Every dollar spent on an impression served on an MFA site or next to a highly toxic video yields little or no return on investment. MFA sites, in particular, are notorious for posting high viewability metrics while delivering almost no genuine human engagement, which inflates your campaign metrics while draining your budget.

- The "Guilt by Association" Effect: In the eyes of the modern consumer, adjacency equals endorsement. If a user sees your pre-roll ad playing before a video promoting dangerous conspiracy theories, they may subconsciously link your brand to those ideologies. A frequently repeated claim from consumer psychology is that it can take up to five positive brand interactions to undo the damage of one severe negative association.

- Public Relations Crisis Management: When a brand safety failure goes viral on social media, the subsequent PR crisis requires massive capital to manage. Marketing directors are forced to pause entire programmatic campaigns, hire crisis communication firms, and issue public apologies—costing hundreds of thousands of dollars in lost momentum and direct fees.

- Decreased Customer Lifetime Value (CLV): Trust is the currency of the 2026 digital economy. Consumers are highly value-driven, and a brand safety misstep can lead to immediate churn, particularly among Gen Z and Millennial cohorts who heavily scrutinize the ethical footprint of the brands they support.

The Resurgence of Contextual Targeting

With third-party cookies now blocked by default in browsers such as Safari and Firefox, and with global privacy regulations such as GDPR and CCPA continuing to tighten, the advertising industry has pivoted sharply back to contextual targeting. However, this is not the keyword-matching contextual targeting of the 2010s. In 2026, contextual targeting is powered by advanced Artificial Intelligence, Natural Language Processing (NLP), and computer vision.

Contextual targeting analyzes the actual content of a webpage, video, or audio stream to determine its meaning, sentiment, and emotion before an ad is served. This makes it a powerful, privacy-compliant weapon in the brand safety arsenal.

Modern contextual AI doesn't just read the text on a page; it understands the nuance. For example, the word "shoot" could appear in an article about a tragic mass shooting (unsafe), an article about a basketball game (safe), or an article about a photography session (safe). Legacy keyword blocklists would blindly block all three, severely limiting an advertiser's reach and artificially inflating CPMs. 2026 contextual AI understands the semantic difference, blocking the tragic news story while allowing the ad to serve on the sports and photography pages.
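
The "shoot" example can be made concrete with a small Python sketch. It is only a toy illustration: the co-occurrence check below is a hypothetical stand-in for a real NLP model, and the keyword sets, function names, and sample headlines are all assumptions invented for this example.

```python
import re

# Hypothetical legacy blocklist and hypothetical "unsafe context" cues.
# A production system would use a trained language model, not word sets.
BLOCKED_KEYWORDS = {"shoot", "shooting"}
UNSAFE_CONTEXT = {"gunman", "victims", "killed", "tragedy"}

PAGES = {
    "news": "Gunman opens fire: several killed in mass shooting tragedy",
    "sports": "Guard continues to shoot well from three in the basketball final",
    "photo": "How to shoot portraits during a golden hour photography session",
}


def tokens(text):
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def keyword_blocklist_allows(text):
    """Legacy approach: refuse the page if any blocked keyword appears."""
    return not (tokens(text) & BLOCKED_KEYWORDS)


def context_aware_allows(text):
    """Toy stand-in for contextual AI: refuse the page only when a blocked
    keyword co-occurs with a cue that signals an unsafe context."""
    words = tokens(text)
    if not (words & BLOCKED_KEYWORDS):
        return True
    return not (words & UNSAFE_CONTEXT)


if __name__ == "__main__":
    for name, text in PAGES.items():
        print(name, keyword_blocklist_allows(text), context_aware_allows(text))
```

In this sketch the keyword blocklist rejects all three pages, while the context-aware check rejects only the news story. That lost reach on the sports and photography pages is the overblocking cost described above.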

Furthermore, computer vision technology now analyzes video content frame by frame. It can detect logos, facial expressions, actions, and even on-screen text in real time, so that your video ads run only in environments that align with your brand's specific suitability guidelines.

Platform-Specific Brand Safety Controls: A 2026 Guide

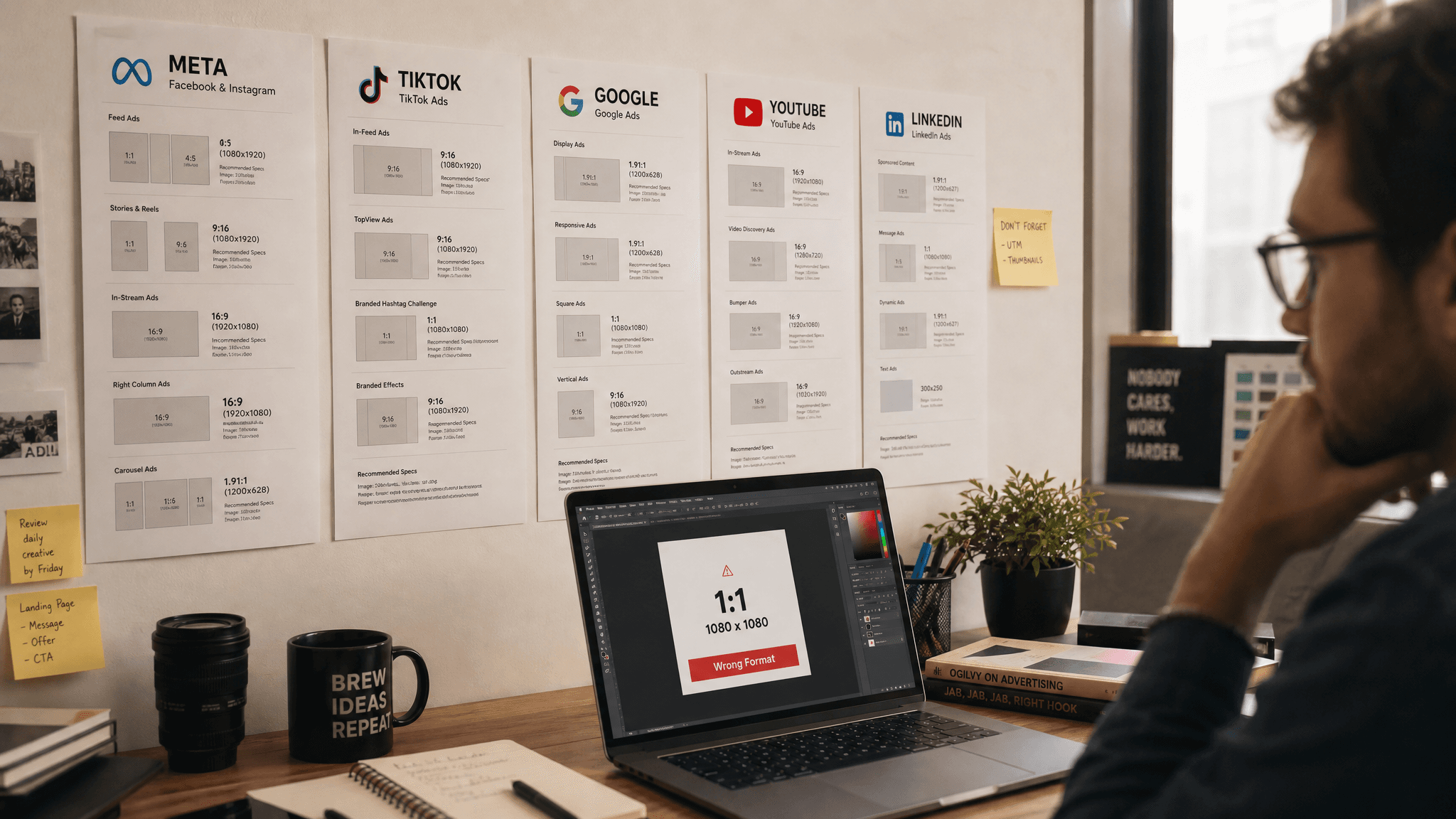

While third-party tools are essential, protecting your ad spend starts natively within the platforms where you buy media. The major walled gardens have significantly upgraded their brand safety controls in response to advertiser demands. Ad ops teams must be intimately familiar with the specific levers available on Meta, Google, and TikTok.

Meta (Facebook & Instagram)

Meta has continuously refined its Brand Safety and Suitability Center. In 2026, advertisers have granular control over where their ads appear across the Facebook Feed, Instagram Reels, and the Meta Audience Network.

- Inventory Filters: Meta offers three tiers of inventory filters: Expanded, Moderate, and Limited. Most brands default to Moderate, which blocks universally offensive content. Brands with strict risk tolerances should use the Limited inventory filter, which applies maximum restrictions on sensitive topics like debated social issues and tragedy.

- Blocklists: Advertisers can upload lists of specific publishers, app URLs, and Facebook Pages where they refuse to show ads. In 2026, maintaining dynamic, frequently updated blocklists is a core responsibility of any ad ops team.

- Content-Based Controls: Meta's AI now allows advertisers to exclude specific topics at the post level, particularly within Reels, ensuring ads don't serve immediately following a user-generated video about a controversial political topic.

Google (Google Ads & YouTube)

As the largest digital advertising ecosystem, Google offers robust controls, particularly for YouTube, which has historically been a challenging environment for brand safety due to the sheer volume of user-generated content.

- Content Suitability Settings: Similar to Meta, Google provides Expanded, Standard, and Limited inventory tiers. This applies across YouTube and the Google Display Network (GDN).

- Dynamic Exclusion Lists: Google allows advertisers to subscribe to dynamic exclusion lists managed by trusted third parties. As these third parties identify new MFA sites or toxic channels, your campaigns automatically stop bidding on them, providing real-time protection without manual updates.

- YouTube Specifics: Advertisers can exclude specific content labels (e.g., DL-MA for mature audiences), content types (e.g., live streaming, embedded YouTube videos), and specific sensitive categories (e.g., tragedy and conflict, sensitive social issues).

TikTok

TikTok's explosive growth brought unique brand safety challenges, primarily driven by the unpredictable nature of viral trends and the platform's hyper-personalized "For You" feed. TikTok has responded with aggressive safety infrastructure.

- TikTok Inventory Filter: Aligned with the Global Alliance for Responsible Media (GARM) framework, TikTok allows advertisers to choose between Expanded (formerly Full), Standard, and Limited inventory. The Limited tier places ads adjacent to content that carries the lowest risk of brand safety violations.

- Category Exclusions: Advertisers can exclude their ads from appearing next to specific categories of content, such as gaming, beauty, or entertainment, if those categories conflict with their brand identity.

- Pre-Bid and Post-Bid Integrations: TikTok has deeply integrated with major third-party verification partners, allowing ad ops teams to apply their custom suitability profiles directly to their TikTok ad buys.

The Role of Third-Party Verification Tools

While platform-specific controls are necessary, relying solely on them violates a fundamental rule of digital advertising: never let the platforms grade their own homework. The walled gardens have an inherent financial incentive to maximize available inventory, which can sometimes conflict with an advertiser's desire for strict safety.

This is why third-party verification partners—such as Integral Ad Science (IAS), DoubleVerify (DV), and Zefr—are indispensable in 2026. These platforms act as independent auditors, providing impartial measurement and blocking capabilities.

Third-party tools operate on two main fronts:

- Pre-Bid Avoidance: This is the most cost-effective strategy. Verification tools integrate directly with your Demand Side Platform (DSP). Before a bid is even placed, the tool analyzes the URL or video content. If it violates your brand's custom suitability profile, the bid is blocked, saving your budget from being wasted on an unsafe impression.

- Post-Bid Blocking and Measurement: If an unsafe impression slips through the cracks, post-bid blocking prevents the ad creative from actually rendering on the page, serving a blank pixel instead. While you still pay for the impression, you protect your brand from the negative adjacency. The tool then logs the violation, allowing your ad ops team to optimize future bidding.
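
The two fronts above can be sketched as a simple decision flow. This is a minimal Python illustration under stated assumptions, not how IAS, DoubleVerify, Zefr, or any DSP actually implements verification: the `SuitabilityProfile` and `Verifier` classes, the category labels, and the `BLANK_PIXEL` marker are all hypothetical names invented for the example.

```python
from dataclasses import dataclass, field

BLANK_PIXEL = "BLANK_PIXEL"  # hypothetical marker for a suppressed creative


@dataclass
class SuitabilityProfile:
    """A brand's custom list of content categories it refuses to appear next to."""
    blocked_categories: set = field(default_factory=set)


@dataclass
class Verifier:
    profile: SuitabilityProfile
    violations: list = field(default_factory=list)

    def is_suitable(self, page_categories):
        """True when the page shares no category with the blocked set."""
        return not (set(page_categories) & self.profile.blocked_categories)

    def pre_bid(self, url, page_categories):
        """Pre-bid avoidance: return True only if the DSP should place a bid."""
        return self.is_suitable(page_categories)

    def post_bid(self, url, page_categories, creative):
        """Post-bid blocking: the impression is already paid for, so either
        render the creative or suppress it and log the violation."""
        if self.is_suitable(page_categories):
            return creative
        self.violations.append(url)
        return BLANK_PIXEL


if __name__ == "__main__":
    verifier = Verifier(SuitabilityProfile({"hate_speech", "conspiracy"}))
    print(verifier.pre_bid("https://sports.example/game", ["sports"]))
    print(verifier.post_bid("https://video.example/clip", ["conspiracy"], "ad-123"))
    print(verifier.violations)
```

The split mirrors the cost difference described above: a failed pre-bid check costs nothing because no bid is placed, while a failed post-bid check still consumes the impression but feeds the violation log used to tune future bidding.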

How to Build an Ironclad Brand Safety Policy

Technology alone cannot solve brand safety. Protecting your ad spend requires a formalized, cross-departmental strategy. Marketing directors must lead the charge in establishing a clear brand safety policy that guides the ad ops team's daily execution. Here is a step-by-step framework for building a robust policy in 2026:

- Define Your Brand's Risk Tolerance: Gather stakeholders from marketing, PR, legal, and executive leadership. Have an honest conversation about what your brand stands for and what it cannot tolerate. A disruptive, edgy streetwear brand will have a vastly different risk tolerance than a pediatric healthcare provider. Document these boundaries clearly.

- Map Suitability to the GARM Framework: The Global Alliance for Responsible Media ceased operations in 2024, but its standardized framework for categorizing content risk (Floor, High, Medium, Low) remains a common reference point. Use this framework to translate your subjective brand values into objective, actionable categories that your agency and ad ops teams can implement.

- Establish Dynamic Allowlists and Blocklists: Move away from static spreadsheets. An allowlist (a list of pre-approved, highly trusted publishers) is the safest approach for high-risk brands, though it limits scale. A blocklist (domains to avoid) maximizes scale but carries more risk. In 2026, you should utilize dynamic lists that update automatically via API based on continuous AI scanning of the web.

- Implement an MFA Strategy: Made for Advertising sites are the silent killers of ROI. Explicitly state in your policy how your team will identify and exclude MFA inventory. Demand transparency from your programmatic partners regarding the percentage of your budget currently bleeding into MFA domains.

- Create an Incident Response Plan: Assume that a breach will eventually happen. Outline exactly what steps must be taken if an ad appears next to toxic content. Who has the authority to pause the campaign? Who drafts the external communications? Having a plan in place turns a potential PR disaster into a swiftly managed operational hiccup.
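
Parts of this framework can be written down as a machine-readable policy. The Python sketch below is one possible shape, assuming the four GARM-style risk tiers named above; the category keys, the example domains, the `allowlist_only` flag, and both function names are hypothetical choices made for illustration, not a standard schema.

```python
from enum import IntEnum


class Risk(IntEnum):
    """Risk tiers in increasing severity; FLOOR is never acceptable."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    FLOOR = 4


# Hypothetical policy for a cautious brand. All keys and domains are illustrative.
POLICY = {
    "max_risk": {"debated_social_issues": Risk.LOW, "crime": Risk.MEDIUM},
    "default_max_risk": Risk.MEDIUM,
    "allowlist": {"trusted-news.example"},
    "blocklist": {"mfa-farm.example"},
    "allowlist_only": False,  # True trades scale for safety
}


def placement_allowed(domain, category, risk, policy=POLICY):
    """Apply the blocklist, optional allowlist-only mode, then per-category risk limits."""
    if risk == Risk.FLOOR or domain in policy["blocklist"]:
        return False
    if policy["allowlist_only"] and domain not in policy["allowlist"]:
        return False
    limit = policy["max_risk"].get(category, policy["default_max_risk"])
    return risk <= limit


def mfa_spend_share(spend_by_domain, mfa_domains):
    """Fraction of total spend that went to domains flagged as MFA."""
    total = sum(spend_by_domain.values())
    if total == 0:
        return 0.0
    mfa = sum(v for d, v in spend_by_domain.items() if d in mfa_domains)
    return mfa / total


if __name__ == "__main__":
    print(placement_allowed("blog.example", "crime", Risk.MEDIUM))
    print(placement_allowed("blog.example", "debated_social_issues", Risk.MEDIUM))
    spend = {"blog.example": 750.0, "mfa-farm.example": 250.0}
    print(mfa_spend_share(spend, POLICY["blocklist"]))
```

Keeping the policy as data rather than prose makes the documented risk tolerance something an agency or ad ops team can review, version, and test, and the spend-share figure gives a concrete number to request from programmatic partners.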

How HawtAds Ensures Brand-Safe Ad Creation at Scale

While much of the brand safety conversation focuses on where the ad is placed, true protection begins with what the ad contains. In the rush to produce high volumes of creative assets for hyper-personalized campaigns, brands often inadvertently generate ad copy or visuals that violate platform policies or their own brand guidelines.

This is where HawtAds fundamentally changes the workflow for brand managers and ad ops teams. HawtAds is not just a platform for scaling ad creation; it is engineered with built-in compliance and brand safety controls at its core.

When you use HawtAds to generate your campaign creatives, the platform's intelligent algorithms cross-reference your outputs against up-to-date platform policies (Meta, Google, TikTok) and your own custom brand guidelines. The goal is for the messaging, imagery, and tone to be compliant before they ever reach your DSP or social ad manager. By integrating HawtAds into your workflow, you reduce the risk of ad rejections, account suspensions, and off-brand messaging, allowing your team to scale campaigns rapidly with greater confidence.

Conclusion: Brand Safety as a Competitive Advantage

As we navigate the complexities of 2026, brand safety can no longer be viewed merely as a defensive tactic or an operational tax. It is a strategic imperative. Brands that successfully protect their digital environments build deeper trust with their consumers, achieve higher returns on their ad spend by eliminating MFA waste, and safeguard their long-term equity.

By understanding the nuances of contextual targeting, mastering platform-specific controls, leveraging third-party verification, and utilizing compliant creative platforms like HawtAds, marketing directors and ad ops teams can transform brand safety from a source of anxiety into a powerful competitive advantage.